Thomas Pellegrini

Assistant Professor in Computer Science

Interests

- Deep semi-supervised learning for audio signal applications

- Automatic Speech Recognition, Audio event detection

- Pronunciation assessment for people learning a second language or having speech disorders

Projects

- ANITI: associate researcher, together with Reda Chhaibi, on Fabrice Gamboa's chair "AI for physical models with geometric tools"

- LUDAU, ANR JCJC project (ANR-18-CE23-0005-01), Oct. 2018 - Mar. 2022: Lightly-supervised and Unsupervised Discovery of Audio Units using Deep Learning (web)

- REP4SPEECH, Labex AMIES exploratory project, April 2018 - April 2019: unsupervised representation learning for speech analysis

Demos

Some code for these demos is available: https://github.com/topel-

Feb. 2019. Cosine-similarity penalty to discriminate sound classes in weakly-supervised sound event detection. Accepted to IJCNN 2019, PDF: arxiv, code: github

-

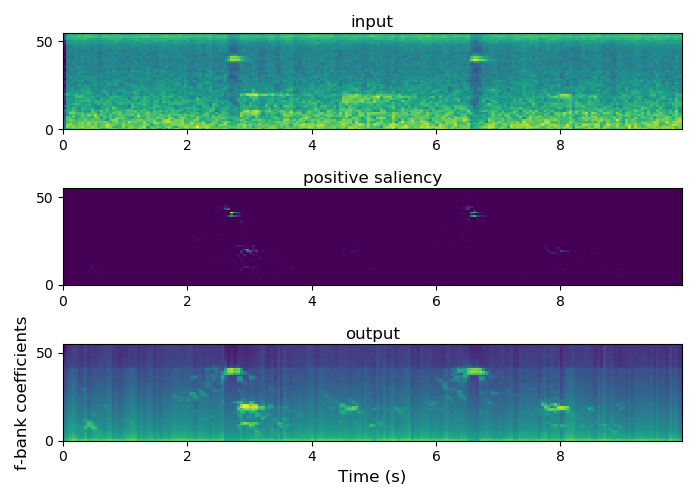

January 2017. Bird Audio Detection 2017 challenge: my solution was based on densely connected CNNs (final AUC score: 88.2%, rank: 3rd/30, official result page). Code available at github repo. Paper at EUSIPCO'17 (PDF). Saliency maps can be extracted to retrieve which frequency components were important to take decisions. The spectrograms of the original audio samples can be multiplied to the saliency maps and then put back to the time domain to synthesize "saliency-masked" audio samples. Audio examples available at bird sounds.
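The saliency-masking step described above can be sketched as follows. This is a minimal illustration and not the code from the repository: the STFT settings (`n_fft`, `hop`), the helper names, and the synthetic two-tone signal are all assumptions, and the hand-made low-pass `saliency` array stands in for a saliency map that would normally come from the trained network.

```python
import numpy as np

def stft(x, n_fft=256, hop=64):
    """Short-time Fourier transform with a Hann window; returns (frames, bins)."""
    window = np.hanning(n_fft)
    n_frames = 1 + (len(x) - n_fft) // hop
    frames = np.stack([x[i * hop:i * hop + n_fft] * window for i in range(n_frames)])
    return np.fft.rfft(frames, axis=1)

def istft(spec, n_fft=256, hop=64):
    """Inverse STFT by weighted overlap-add."""
    window = np.hanning(n_fft)
    n_frames = spec.shape[0]
    length = n_fft + hop * (n_frames - 1)
    out = np.zeros(length)
    norm = np.zeros(length)
    frames = np.fft.irfft(spec, n=n_fft, axis=1)
    for i in range(n_frames):
        sl = slice(i * hop, i * hop + n_fft)
        out[sl] += frames[i] * window
        norm[sl] += window ** 2
    # The floor avoids amplifying noise at the very edges of the signal,
    # where little window energy overlaps; those samples are less reliable.
    return out / np.maximum(norm, 1e-3)

def saliency_masked_audio(x, saliency, n_fft=256, hop=64):
    """Multiply the complex spectrogram by a saliency map and resynthesize audio."""
    spec = stft(x, n_fft, hop)
    if saliency.shape != spec.shape:
        raise ValueError("saliency map must have the same shape as the spectrogram")
    return istft(spec * saliency, n_fft, hop)

# Synthetic example (assumption): a 500 Hz tone plus a 3000 Hz tone at 8 kHz.
sr = 8000
t = np.arange(4096) / sr
signal = np.sin(2 * np.pi * 500 * t) + np.sin(2 * np.pi * 3000 * t)

# Hypothetical saliency map: only frequency bins below 1000 Hz are "salient".
saliency = np.zeros(stft(signal).shape)
saliency[:, :32] = 1.0  # bin spacing is sr / n_fft = 31.25 Hz

masked = saliency_masked_audio(signal, saliency)
```

In this toy setting the resynthesized `masked` signal keeps the 500 Hz tone and suppresses the 3000 Hz one, which mirrors the idea of listening only to the time-frequency regions the network relied on.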

-

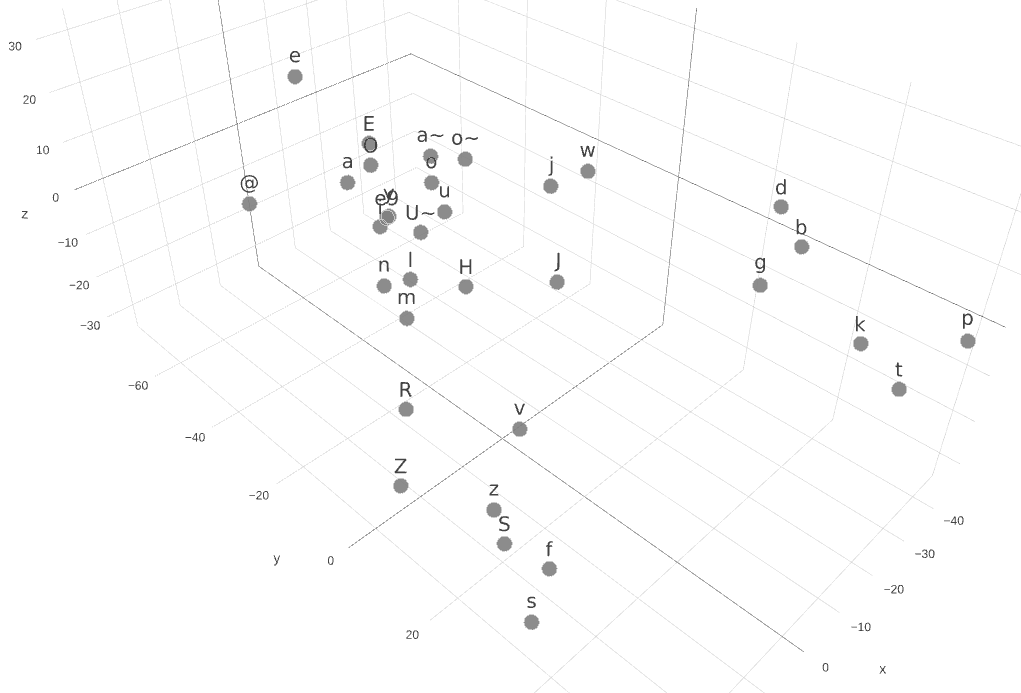

Convolutional neural networks are amazing at extracting their own representations of structured data, such as speech. Here is an illustration showing that phonetic categories, such as voiced plosives, voiceless plosives, fricatives, are inferred by the layers of a network trained for phone recognition for French. Click on the image to get an interactive 3-d representation of PCA applied to activations of the first layer of this CNN. (Paper @ INTERSPEECH 2016)
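The PCA projection behind such a 3-D view can be sketched as below. This is only an illustration under stated assumptions: the `activations` array is synthetic (three random clusters standing in for phonetic categories) rather than real first-layer CNN outputs, and `pca_project` is a hypothetical helper name.

```python
import numpy as np

def pca_project(activations, n_components=3):
    """Project row vectors onto their first principal components via SVD."""
    centered = activations - activations.mean(axis=0)
    # Rows of vt are the principal directions, ordered by decreasing variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:n_components].T

# Synthetic stand-in for layer activations (assumption): 300 frames, 64 units,
# drawn around three cluster centers that play the role of phonetic categories.
rng = np.random.default_rng(0)
centers = rng.normal(scale=5.0, size=(3, 64))
labels = rng.integers(0, 3, size=300)
activations = centers[labels] + rng.normal(size=(300, 64))

coords = pca_project(activations)  # shape (300, 3), ready for a 3-D scatter plot
```

Coloring the rows of `coords` by `labels` in a 3-D scatter plot would then show whether the categories separate in the projected space.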

- Speaking and eating: demo of a classifier detecting 6 food types and a "no food" type. This task was part of the INTERSPEECH 2015 paralinguistic challenges. The classifier consists of a convolutional neural network with log-Mel spectra as input. Contributor: Valentin Barriere (July 2015).

- Demo of a gesture recognition prototype based on hidden Markov models (HMMs). Contributors: Baptiste Angles, Patrice Guyot, Christophe Mangou (June 2014).