Unison Choir Detection

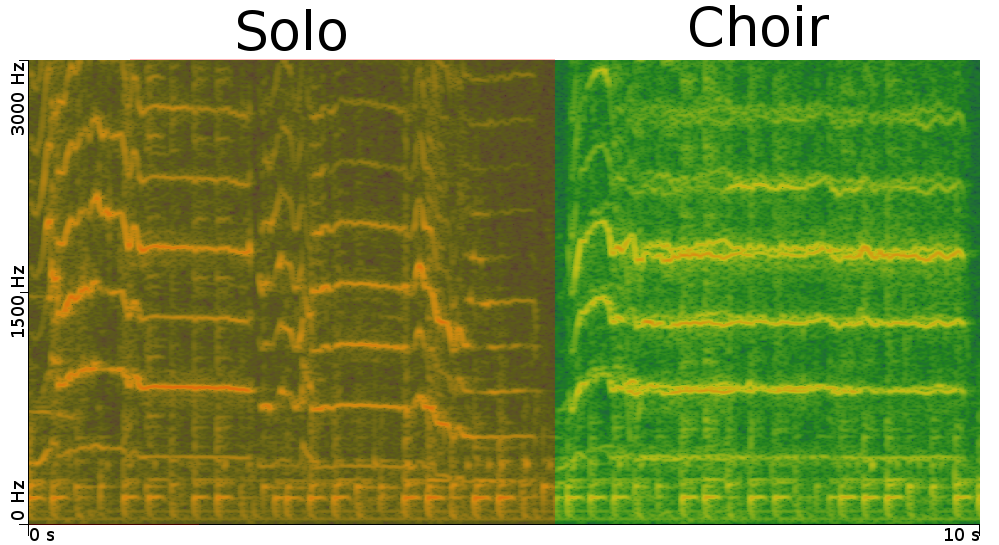

Detecting a unison choir is difficult because the singers aim to sing the same thing at the same time, which leads some algorithms to classify such passages as monophonic. However, small divergences between the harmonics of the different singers can be observed, as shown in the figure below.

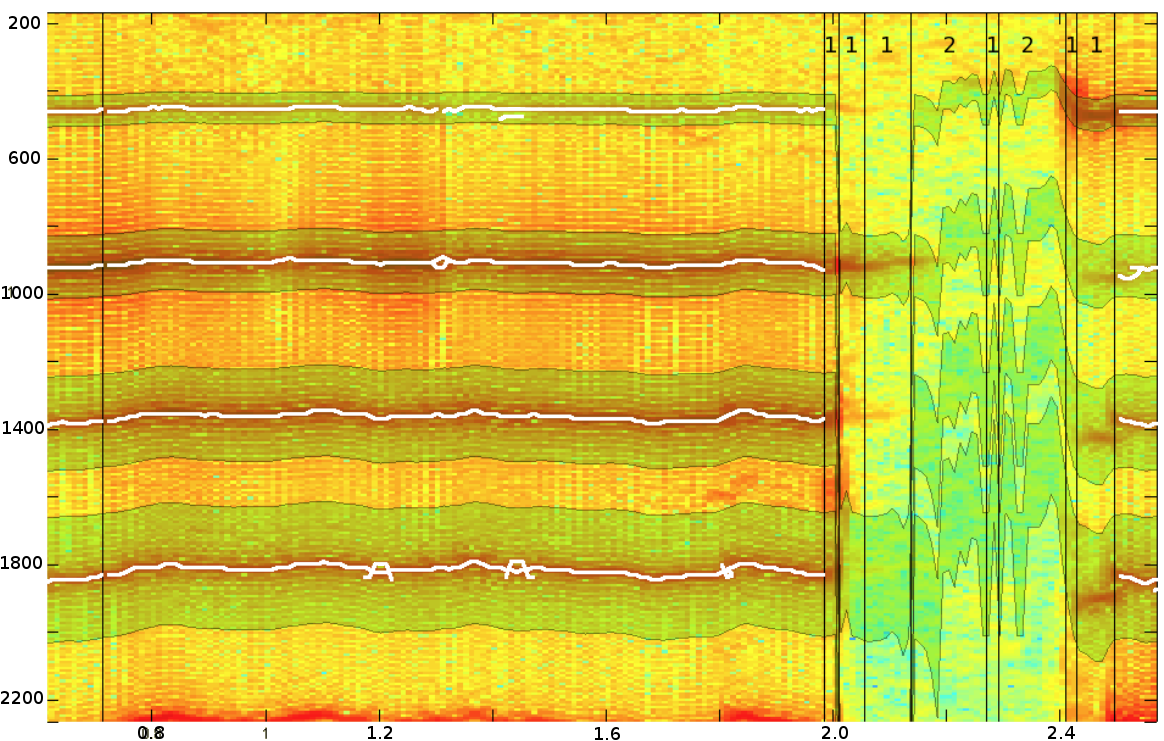

Example of a solo part and a unison choir part. The divergences between the singers are clearly visible in the choir part.

To detect these divergences, we analyse specific time-frequency areas where they are prone to occur.

Time-Frequency restriction

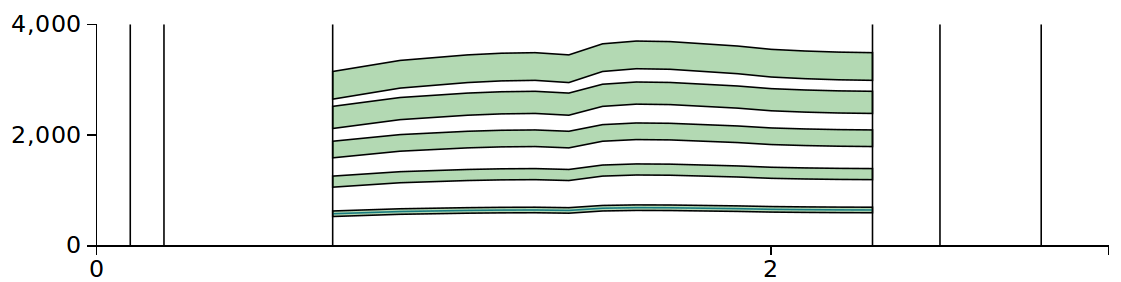

As these divergences are more visible in sustained notes, we segment the signal using the Forward/Backward divergence algorithm [1] and analyse only the longest stable segments.
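The selection of the longest stable segments can be sketched as follows. This is only an illustration: the segment boundaries are assumed to be produced by a separate segmentation step (the Forward/Backward divergence itself is not implemented here), and the function name and the `n_keep` and `min_duration` parameters are hypothetical.

```python
def longest_segments(boundaries, n_keep=3, min_duration=0.0):
    """Return the n_keep longest (start, end) segments, in temporal order.

    boundaries: sorted boundary times in seconds, assumed to come from a
    separate segmentation step that is not implemented in this sketch.
    """
    # Consecutive boundaries delimit one quasi-stationary segment each.
    segments = list(zip(boundaries[:-1], boundaries[1:]))
    long_enough = [s for s in segments if s[1] - s[0] >= min_duration]
    # Keep the longest segments, then restore chronological order.
    kept = sorted(long_enough, key=lambda s: s[1] - s[0], reverse=True)[:n_keep]
    return sorted(kept)


if __name__ == "__main__":
    example_boundaries = [0.0, 0.2, 1.5, 1.7, 3.0, 3.1]
    print(longest_segments(example_boundaries, n_keep=2))
```

Only the segments returned by such a selection would then be passed on to the band analysis.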

Unison choir segments are close to a monophonic context, so we can use the monophonic fundamental frequency estimator YIN [2] to extract the note sung by the group. We then define frequency bands around the fundamental frequency and its harmonics, with increasing width so as to keep the same information in every band.
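One way to picture these bands: a small pitch deviation around the fundamental is multiplied by k at the k-th harmonic, so a band whose width grows with k covers the same relative deviation. The sketch below assumes the width is proportional to the harmonic frequency; the function name and the `rel_half_width` tolerance are hypothetical and not taken from the original method.

```python
def harmonic_bands(f0, n_harmonics=5, rel_half_width=0.03):
    """Return (low, high) bands in Hz centred on f0 and its harmonics.

    rel_half_width is an assumed tolerance relative to the band centre,
    so the band around harmonic k is k times wider than the one around f0.
    """
    bands = []
    for k in range(1, n_harmonics + 1):
        centre = k * f0
        half_width = rel_half_width * centre
        bands.append((centre - half_width, centre + half_width))
    return bands


if __name__ == "__main__":
    # f0 would come from a monophonic estimator such as YIN.
    for low, high in harmonic_bands(220.0, n_harmonics=4):
        print(f"{low:8.1f} Hz .. {high:8.1f} Hz")
```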

Selected bands for analysis

Tracking is then performed on those bands.

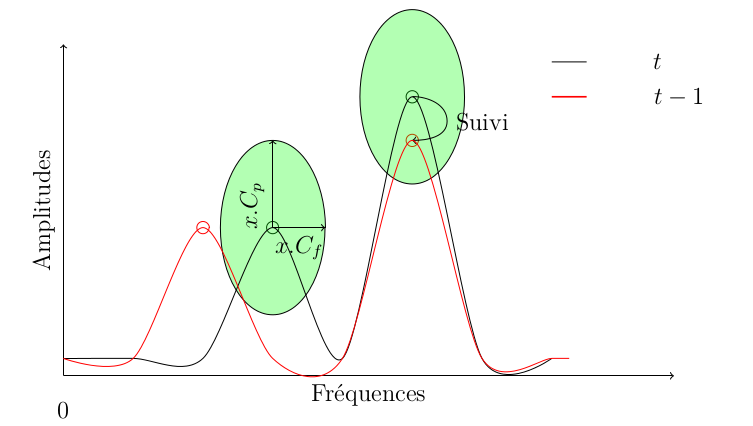

Tracking

The tracking is performed using the technique proposed by Taniguchi et al. [3]. In each band, every peak of the spectrum is selected. The tracking consists in determining whether a peak p of frame t is the evolution of a peak in frame t-1. To do so, Taniguchi et al. propose a distance used to link the peaks. This method can be seen as looking for peaks in an amplitude-frequency neighbourhood around a peak of the previous frame.

The linked peaks follow the evolution of the sources and are called sinusoidal segments. The only modification made to this algorithm is to allow multiple successors for a single peak.
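The linking step can be illustrated with a minimal sketch. The distance used here is a normalised Euclidean distance in the amplitude-frequency plane, chosen for illustration only; it is not the distance defined in [3]. The function name, the peak representation as `(frequency_hz, amplitude_db)` tuples, and the `max_df` and `max_da` neighbourhood sizes are assumptions.

```python
def link_peaks(prev_peaks, curr_peaks, max_df=15.0, max_da=6.0):
    """Link each peak of frame t to its closest predecessor in frame t-1.

    Peaks are (frequency_hz, amplitude_db) tuples. Returns a list of
    (prev_index, curr_index) pairs. A previous peak may appear in several
    pairs, i.e. it may have multiple successors.
    """
    links = []
    for j, (f_curr, a_curr) in enumerate(curr_peaks):
        best_i, best_dist = None, 1.0  # 1.0 is the edge of the neighbourhood
        for i, (f_prev, a_prev) in enumerate(prev_peaks):
            # Illustrative distance, normalised by the neighbourhood size.
            dist = (((f_curr - f_prev) / max_df) ** 2
                    + ((a_curr - a_prev) / max_da) ** 2) ** 0.5
            if dist <= best_dist:
                best_i, best_dist = i, dist
        if best_i is not None:
            links.append((best_i, j))
    return links


if __name__ == "__main__":
    previous = [(440.0, -20.0)]
    current = [(436.0, -21.0), (445.0, -19.0), (600.0, -20.0)]
    # The single previous peak is expected to split into two successors.
    print(link_peaks(previous, current))
```

Chaining these links frame after frame yields the sinusoidal segments; a peak with two successors is where one segment forks into two.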

Decision

The decision between unison choir and solo is made by counting the number of sinusoidal segments present simultaneously. If several segments coexist for long enough, the time segment is labelled as choir.

Trackings on solo (left) and choir (right) excerpts. (The frequency axis of the spectrogram is reversed.)
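A minimal sketch of such a decision rule is given below. It assumes that the number of simultaneously active sinusoidal segments has already been counted for each frame of the analysed time segment; the function name and the `min_tracks` and `min_ratio` thresholds are hypothetical values, not those of the original system.

```python
def is_choir(tracks_per_frame, min_tracks=2, min_ratio=0.5):
    """Label a time segment as choir (True) or solo (False).

    tracks_per_frame: number of sinusoidal segments active in each frame.
    The segment is labelled choir when at least min_ratio of its frames
    contain min_tracks or more simultaneous segments (assumed criterion).
    """
    if not tracks_per_frame:
        return False
    multi_frames = sum(1 for n in tracks_per_frame if n >= min_tracks)
    return multi_frames / len(tracks_per_frame) >= min_ratio


if __name__ == "__main__":
    print(is_choir([1, 1, 1, 2, 1]))  # mostly a single track
    print(is_choir([2, 3, 2, 1, 2]))  # several tracks most of the time
```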

Contributors

- Maxime Le Coz (lecoz@irit.fr)

- Régine André-Obrecht

- Julien Pinquier

Main publications

Maxime Le Coz, Régine André-Obrecht, Julien Pinquier. Feasibility of the Detection of Choirs for Ethnomusicologic Music Indexing (regular paper). In: International Workshop on Content-Based Multimedia Indexing (CBMI 2012), Annecy, France, 27/06/2012-29/06/2012, IEEE, p. 145-148, June 2012.

References

[1] Régine André-Obrecht. A new statistical approach for automatic speech segmentation. In: Transactions on Audio, Speech, and Signal Processing, IEEE, Vol. 36 N. 1, p. 29-40, 1988.

[2] Alain de Cheveigné and Hideki Kawahara. YIN, a fundamental frequency estimator for speech and music. In: The Journal of the Acoustical Society of America, vol. 111, no. 4, pp. 1917–1930, 2002.

[3] Taniguchi, Adachi, Okawa, Honda, Shirai. Discrimination of speech, musical instruments and singing voices using the temporal patterns of sinusoidal segments in audio signals. In: Proc. of Interspeech 2005, pp. 589-592, 2005.